World Models vs. Context Graphs: Ground Truth

Marrying prediction with provable provenance so platforms act correctly and match ground reality


When an AI suggests a course of action on the plant floor, the operator’s first thought is simple: can I trust this?

Modern research world models — projects such as Sora and Genie 3 — have pushed AI’s ability to imagine futures, run rich simulations, and propose remediation strategies. They expand what’s possible. But imagination without provenance is a liability in operations. A recommendation that cannot be tied back to the moment’s facts will be ignored or, worse, cause harm.

That is why operational systems need a complementary layer: a situational database — a living, auditable record of the moment’s reality and the decisions that followed. World models propose; the situational database validates. Together they let a platform act quickly and defensibly.

Foresight versus provenance

World models are engines of foresight. They synthesize patterns, interpolate missing data, and explore “what if” scenarios at scale. They help teams stress‑test policies and surface options that humans might miss.

Situational databases are engines of trust. They consolidate the signals that matter in the moment — field reports, authoritative records, and decision history — into a single, queryable view. That provenance answers operational questions: What happened? Who decided? What constraints apply right now? Without that context, even the best simulation is just a hypothesis.

The real power comes when these layers are fused: a world model suggests a remediation, the situational database verifies constraints and provenance, and the platform acts only when the recommendation aligns with the ground truth.

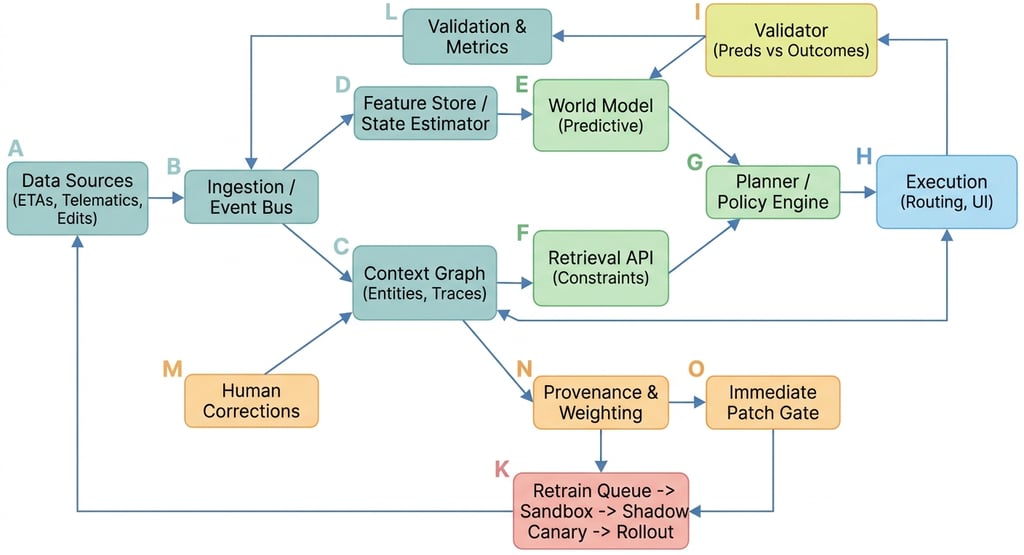

How it works:

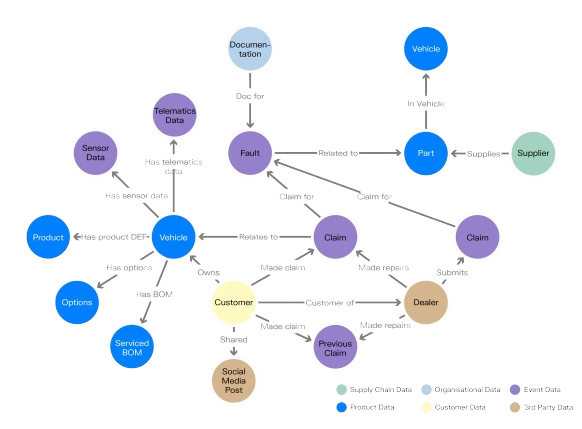

Context Graph plays the role of the situational database described above. It stores who, what, when, and why: carrier records, pickup confirmations, decision traces, and correction provenance. It updates in real time and answers the planner’s retrieval queries.

World Model predicts outcomes (ETA variance, pickup success, cost impact). It takes its input features from the state estimator, and the planner uses it for forward planning and counterfactual analysis.

Planner asks the Context Graph for constraints and the World Model for predicted outcomes, then issues actions to execution.
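The planner step above can be sketched in a few lines of Python. This is a minimal illustration, not the platform's actual interface: `Prediction`, `plan`, `blocked_actions`, and the `min_pickup_success` threshold are hypothetical names, and the selection rule (cheapest candidate that clears the success threshold) is an assumption made for the example. The constraints are assumed to come from the Context Graph and the `predict` callable from the World Model.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Prediction:
    """Hypothetical predicted outcome for one candidate action."""
    eta_variance_hours: float
    pickup_success_prob: float
    cost_impact: float


def plan(
    candidates: list[str],
    blocked_actions: set[str],            # assumed to come from the Context Graph
    predict: Callable[[str], Prediction],  # assumed to wrap the World Model
    min_pickup_success: float = 0.9,       # illustrative threshold
) -> Optional[str]:
    """Return the cheapest candidate that is allowed and predicted to succeed."""
    # 1. Drop anything the recorded constraints forbid.
    allowed = [a for a in candidates if a not in blocked_actions]
    # 2. Ask the world model for a predicted outcome per remaining candidate.
    scored = [(predict(a), a) for a in allowed]
    # 3. Keep only candidates whose predicted success clears the threshold.
    viable = [(p, a) for p, a in scored if p.pickup_success_prob >= min_pickup_success]
    if not viable:
        return None  # nothing passes: escalate to a human instead of acting
    # 4. Issue the lowest-cost viable action.
    return min(viable, key=lambda pa: pa[0].cost_impact)[1]
```

Returning `None` when no candidate survives keeps the planner from acting on a prediction the recorded constraints do not support.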

Validator compares predictions to real outcomes recorded in the Context Graph to detect drift and trigger retrains.
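One simple way to picture the validator is a rolling error check. The sketch below is an assumption, not the article's stated method: it uses mean absolute error over a fixed window as the drift signal, and `DriftValidator`, `window`, and `threshold` are hypothetical names chosen for illustration.

```python
from collections import deque
from statistics import mean


class DriftValidator:
    """Tracks rolling mean absolute error between predicted and recorded outcomes."""

    def __init__(self, window: int = 50, threshold: float = 2.0) -> None:
        # Only the most recent `window` errors are kept.
        self.errors = deque(maxlen=window)
        self.threshold = threshold

    def record(self, predicted: float, actual: float) -> bool:
        """Add one prediction/outcome pair; return True if a retrain should be queued."""
        self.errors.append(abs(predicted - actual))
        # Wait for a full window so a single bad prediction does not trigger a retrain.
        window_full = len(self.errors) == self.errors.maxlen
        return window_full and mean(self.errors) > self.threshold
```

Here `predicted` would be a World Model output such as an ETA in hours, and `actual` the outcome later recorded in the Context Graph.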

Provenance & Weighting tags each human correction with the corrector’s role and the correction’s recency. High‑trust corrections can trigger immediate patches; all others go to the retraining queue and are promoted in stages: sandbox → shadow → canary → rollout.
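Weighting by role and recency could look something like the following. Everything here is illustrative: the role weights, the seven-day half-life, the 0.8 patch threshold, and the names `Correction`, `trust_score`, and `route` are assumptions made for the sketch, and exponential decay is just one plausible way to model recency.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical role weights; a real deployment would define its own.
ROLE_WEIGHT = {"qa_lead": 1.0, "plant_manager": 0.9, "operator": 0.6}


@dataclass(frozen=True)
class Correction:
    role: str
    made_at: datetime


def trust_score(
    c: Correction, now: datetime, half_life: timedelta = timedelta(days=7)
) -> float:
    """Role weight decayed exponentially by age (halves every `half_life`)."""
    age = max(now - c.made_at, timedelta(0))  # clamp future timestamps to zero age
    return ROLE_WEIGHT.get(c.role, 0.3) * 0.5 ** (age / half_life)


def route(c: Correction, now: datetime, patch_threshold: float = 0.8) -> str:
    """High-trust corrections patch immediately; the rest wait for retraining."""
    if trust_score(c, now) >= patch_threshold:
        return "immediate_patch"
    return "retrain_queue"
```

Under these assumed numbers, a fresh correction from a QA lead is patched immediately, while the same correction a week old, or a fresh one from a line operator, goes to the retraining queue and through the staged rollout.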

How grounding makes imagination useful

A world model might recommend releasing inventory to meet an urgent order. Before anyone touches the release button, operations need to know whether the batch in question passed QC, whether the PO is committed, whether the goods are already in transit, and whether any prior hold or override exists. Enrichment against authoritative sources and a recorded decision trail turn a speculative suggestion into a defensible action.
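The four checks listed above translate naturally into a small gate. The sketch below is a hedged illustration: `BatchFacts` and `release_blockers` are hypothetical names, and it assumes that an uncommitted PO, goods already in transit, or an existing hold each count as a reason not to release, which the text implies but does not state outright.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BatchFacts:
    """Hypothetical snapshot of the facts looked up before a release."""
    passed_qc: bool
    po_committed: bool
    in_transit: bool
    has_prior_hold: bool


def release_blockers(facts: BatchFacts) -> list[str]:
    """Return the reasons a release must not proceed; empty means no blocker found."""
    blockers = []
    if not facts.passed_qc:
        blockers.append("batch has not passed QC")
    if not facts.po_committed:
        blockers.append("PO is not committed")
    if facts.in_transit:
        blockers.append("goods are already in transit")
    if facts.has_prior_hold:
        blockers.append("a prior hold or override exists")
    return blockers
```

Returning the list of reasons, rather than a bare yes or no, gives the approver something to cite in the decision trail.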

A situational database is not a silver bullet. It depends on the quality of the signals it ingests and the care taken to validate them. Context around an exception — who reported it, what else is happening, what prior decisions exist — is the multiplier that turns a correct detection into a safe action.

Let's take an example

At a mid‑sized electronics manufacturer, a line operator sent a terse message during a morning shift: “Batch X-77 showing delamination in first run. Holding line.” The message was urgent but incomplete. Left unvalidated, an automated stop‑ship could have delayed several customer shipments and triggered costly expedites.

Instead, the operations platform followed a disciplined flow. It matched the reported batch to the active POs, checked whether any of the affected SKUs were already on trucks, and surfaced the batch’s recent QC history. The system found that only a small subset of modules used X-77, and none were yet in transit; it also found a prior, similar incident three months earlier that had been resolved by a targeted rework rather than a full recall.

Armed with that situational snapshot, the platform ran a short simulation to estimate customer impact for both a full stop and a targeted containment. The recommended action — a temporary hold on the affected line segment plus immediate sampling and expedited QC — was routed to QA and the plant manager with the exact POs, quantities, and precedent cited. The human approver accepted the recommendation within minutes, the containment prevented further defects, and the company avoided unnecessary shipment delays.

That outcome depended on two things: the world model’s ability to simulate impact scenarios and the situational layer’s ability to validate the facts and cite precedent. One without the other would have been either reckless or slow.

Why the fusion matters now

Research world models like Sora and Genie 3 are expanding what AI can imagine. That progress is exciting and useful for planning and simulation. But in live operations, the cost of a mistaken action is high. When world models are anchored to a situational database, three things happen: false positives fall because recommendations are checked against recorded facts before they surface; trust rises because every suggestion cites its provenance; and response time improves because the constraints and precedents needed to act are already on hand.

This is not about exposing proprietary mechanics or promising flawless automation. It is about combining predictive reach with provable provenance so teams can act faster and with confidence.

Conclusion

World models give reach and imagination; situational databases give provenance and trust. The future of trustworthy automation is not choosing one over the other but fusing both: let world models explore possibilities, and let a situational layer ensure those possibilities map to the moment’s reality before anyone acts. That fusion is the operational difference between firefighting and foresight.