Designing Human-In-the-Loop (HITL) systems for accountability

Should human review be limited to high‑impact or low‑confidence cases, with mandatory concise provenance and rationale?


Design HITL for accountability, not busywork

Human‑in‑the‑loop is sold as the safety valve of automation: keep a person watching and nothing catastrophic will happen. In practice that binary thinking breaks systems. Too much human involvement turns automation into a paper tiger — every alert becomes manual triage, throughput collapses, and teams accumulate low‑value work. Too little oversight hands control to brittle models that can hallucinate or miss context only humans see. The real question is not whether to include people, but how to include them so their time is spent on judgment, not busywork.

Think in terms of outsized versus mundane work. Outsized tasks are single decisions with large consequences — stop‑ship calls, recalls, contract breaches, or choices that can cost millions or endanger safety. Mundane tasks are high‑volume, low‑impact checks: routine reconciliations, confirmations, or repetitive triage.

The design rule is simple: automate the mundane; route the outsized. Use measurable signals — confidence scores, provenance checks, and impact estimates — to decide which side a case falls on.

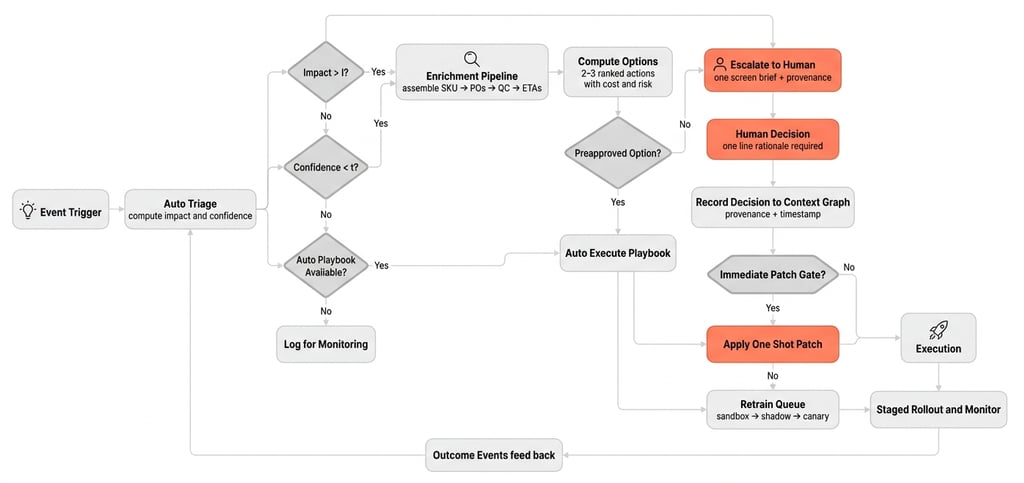

Let's go deeper with a flow diagram

Below is a minimalist HITL routing flow that combines Auto Triage → Enrichment → Option Generation → Human Escalation → Learning Path. The visual shows two gates — Impact and Confidence — that decide whether to enrich and escalate, and a provenance gate that decides immediate patches versus retrain.

Core components and what they do

Event Trigger: Ingests alerts from sensors, customer calls, exception rules, and operator edits.

Auto Triage: Computes a quick Impact Score and Confidence Score to decide routing.

Enrichment Pipeline: Automatically assembles authoritative context: SKU → POs → inventory → QC holds → carrier ETAs → customs flags → recent decision traces.

Option Generator: Produces 2–3 ranked actions with cost, ETA, risk, and the single missing fact to resolve.

Human Escalation One Screen Brief: Compact UI showing options, affected entities, provenance, and a required one‑line rationale.

Context Graph: Records decisions, provenance, timestamps, and data lineage for explainability and training.

Immediate Patch Gate and Retrain Queue: Provenance and trust rules decide whether to apply a one‑shot patch or queue corrections for sandbox retrain, shadow test, canary, and rollout.

Validator and Metrics Loop: Compares predictions to outcomes, triggers drift alerts, and closes the learning loop.

Now, let's overlay a real-life supply chain use case on this flow diagram

Assume a critical component must arrive within 24 hours or a major customer's line stops. The orchestration system must decide whether to reroute an in‑transit pallet by air, pull from a nearby DC, or reallocate from a lower‑priority order.

Event Trigger

Customer support logs an urgent ticket; telematics show a delay at port.

Auto Triage

Compute Impact Score = downtime cost per hour × expected hours of delay.

Compute Confidence Score from inventory visibility, PO status, and customs clearance certainty.
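As a rough illustration, the two scores could be computed as below. The Impact Score follows the formula above directly. The article does not say how the confidence inputs are combined, so taking the minimum of the three signals is an assumption here (the weakest signal bounds the overall confidence), and the field names and dollar figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TriageSignals:
    downtime_cost_per_hour: float  # dollars lost per hour the line is stopped
    expected_delay_hours: float    # projected hours of delay
    inventory_visibility: float    # 0..1, how trustworthy the stock counts are
    po_status_certainty: float     # 0..1, how certain the PO state is
    customs_certainty: float       # 0..1, how certain customs clearance is


def impact_score(s: TriageSignals) -> float:
    """Impact = downtime cost per hour x expected hours of delay."""
    return s.downtime_cost_per_hour * s.expected_delay_hours


def confidence_score(s: TriageSignals) -> float:
    """Assumption: overall confidence is bounded by the weakest signal."""
    return min(s.inventory_visibility, s.po_status_certainty, s.customs_certainty)


# Illustrative numbers only: $50,000/hour downtime, 6 hours of expected delay.
signals = TriageSignals(50_000.0, 6.0, 0.9, 0.8, 0.6)
print(impact_score(signals))      # 300000.0
print(confidence_score(signals))  # 0.6
```

A weighted average would be an equally reasonable combiner; the minimum is simply the more conservative choice when any single blind spot can invalidate the decision.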

Gate decision

If Impact > I or Confidence < t, where I and t are the tuned impact and confidence thresholds, route to the Enrichment Pipeline. Otherwise, if a preapproved playbook exists, auto‑execute the playbook.
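The gate can be sketched as a small routing function. The `Route` names and parameter names are hypothetical. The rule above does not say what happens when a case passes both gates but no playbook exists; this sketch assumes such cases also go to enrichment rather than being dropped.

```python
from enum import Enum


class Route(Enum):
    ENRICH = "enrichment_pipeline"
    AUTO_EXECUTE = "auto_execute_playbook"


def route_case(
    impact: float,
    confidence: float,
    impact_threshold: float,      # "I" in the gate rule
    confidence_threshold: float,  # "t" in the gate rule
    has_preapproved_playbook: bool,
) -> Route:
    # High impact or low confidence: gather context before anyone decides.
    if impact > impact_threshold or confidence < confidence_threshold:
        return Route.ENRICH
    # Low impact and high confidence: a preapproved playbook can run unattended.
    if has_preapproved_playbook:
        return Route.AUTO_EXECUTE
    # Assumption: with no playbook, fall back to enrichment.
    return Route.ENRICH


print(route_case(300_000.0, 0.6, 100_000.0, 0.8, True))  # Route.ENRICH
print(route_case(5_000.0, 0.95, 100_000.0, 0.8, True))   # Route.AUTO_EXECUTE
```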

Enrichment Pipeline assembles

SKU → linked POs and DCs.

Inventory counts and lead times at nearby DCs.

QC holds or PO on‑hold flags.

Carrier ETAs, telematics, customs clearance status.

Recent overrides and who made them from the context graph.

Option Generation

Option A: Air freight from current carrier; cost $X, ETA +6 hours, risk: customs delay.

Option B: Pull from DC 42; cost $Y, ETA +10 hours, risk: QC hold.

Option C: Reallocate from lower‑priority order; cost $Z, ETA +8 hours, risk: customer impact.

Mark options preapproved if within budget and policy.
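A minimal sketch of ranking and preapproval follows. The costs stand in for $X, $Y, and $Z and are invented for illustration, as are the `policy_ok` flag and the budget. The ranking rule (fastest ETA first, then cheapest) is also an assumption, since the article does not specify one.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    cost: float       # dollars; placeholder values, not from the article
    eta_hours: float  # hours until the component arrives
    risk: str
    policy_ok: bool   # hypothetical flag: does the action comply with policy?
    preapproved: bool = False


def rank_and_mark(options: list[Option], budget: float) -> list[Option]:
    """Mark options preapproved if within budget and policy, then rank them."""
    for option in options:
        option.preapproved = option.cost <= budget and option.policy_ok
    # Assumed ranking: fastest ETA first, ties broken by lower cost.
    return sorted(options, key=lambda o: (o.eta_hours, o.cost))


options = [
    Option("A: air freight", 12_000.0, 6.0, "customs delay", policy_ok=True),
    Option("B: pull from DC 42", 3_000.0, 10.0, "QC hold", policy_ok=True),
    Option("C: reallocate order", 1_000.0, 8.0, "customer impact", policy_ok=False),
]
ranked = rank_and_mark(options, budget=5_000.0)
for option in ranked:
    print(option.name, option.eta_hours, option.preapproved)
```

With these made-up numbers only Option B ends up preapproved: A exceeds the budget and C fails the policy check.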

Preapproval check

If a preapproved option exists and confidence is sufficient, auto‑execute it.

If not, present a one‑screen brief to a human with: headline, top 2 options, cost delta, affected POs, missing fact (e.g., QC hold clearance), provenance (who flagged, timestamps), and action buttons.

Human decision

Human picks an option or supplies the missing fact. They enter a one‑line rationale that is recorded to the context graph.
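One way to enforce the one‑line rationale is to reject the record at creation time. The sketch below uses an in‑memory list as a stand‑in for the context graph; the field names, the sample IDs, and the validation rule are assumptions for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    case_id: str
    chosen_option: str
    decided_by: str
    rationale: str
    provenance: tuple[str, ...]  # e.g. who flagged the issue and when
    decided_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        # The rationale is mandatory and must fit on a single line.
        text = self.rationale.strip()
        if not text or "\n" in text:
            raise ValueError("rationale must be a non-empty single line")


# Stand-in for the context graph: an append-only list of decisions.
context_graph: list[DecisionRecord] = []


def record_decision(record: DecisionRecord) -> None:
    context_graph.append(record)


record_decision(
    DecisionRecord(
        case_id="case-001",
        chosen_option="B: pull from DC 42",
        decided_by="ops-lead-1",
        rationale="QC hold cleared by phone; cheapest option that meets the deadline",
        provenance=("support ticket", "port telematics delay"),
    )
)
print(len(context_graph))  # 1
```

Freezing the record keeps the audit trail immutable once written, which matters if the same records later become training data.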

Learning path

If the human is a trusted ops lead and telemetry corroborates the issue, the Immediate Patch Gate may apply a one‑shot rule (for example, deprioritize Carrier X on weekend pickups).

Otherwise the correction goes to the Retrain Queue for sandbox retrain, shadow testing, and canary rollout.
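Expressed as code, the learning path is a two‑condition gate. The function name and return labels are hypothetical, and a real trust check would likely draw on roles and history rather than a single boolean.

```python
# Stages a queued correction passes through before it reaches production.
RETRAIN_STAGES = ("sandbox_retrain", "shadow_test", "canary_rollout")


def learning_path(is_trusted_ops_lead: bool, telemetry_corroborates: bool) -> str:
    """Return where a human correction should go."""
    # Both conditions must hold: a trusted source AND independent evidence.
    if is_trusted_ops_lead and telemetry_corroborates:
        return "immediate_patch"
    return "retrain_queue"


print(learning_path(True, True))   # immediate_patch
print(learning_path(True, False))  # retrain_queue
```

Requiring both conditions means a trusted operator's hunch still goes through the slower, tested path unless the data backs it up.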

Validation

After execution, outcomes feed back to the validator, which compares predicted versus actual ETA, cost, and SLA impact. If errors exceed thresholds, drift alerts trigger a retrain.
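A drift check on ETA could look like the sketch below. Using mean absolute error over a batch of recent cases is an assumption, as are the function name and the threshold values; the same pattern would apply to cost or SLA impact.

```python
from statistics import mean


def eta_drift_alert(
    predicted_hours: list[float],
    actual_hours: list[float],
    max_mean_abs_error: float,
) -> bool:
    """Return True when the average ETA error exceeds the allowed threshold."""
    errors = [abs(p - a) for p, a in zip(predicted_hours, actual_hours)]
    if not errors:
        return False  # nothing to validate yet
    return mean(errors) > max_mean_abs_error


# Illustrative batch: absolute errors are 1, 4, and 0 hours (mean about 1.67).
predicted = [6.0, 10.0, 8.0]
actual = [7.0, 14.0, 8.0]
print(eta_drift_alert(predicted, actual, max_mean_abs_error=1.5))  # True
print(eta_drift_alert(predicted, actual, max_mean_abs_error=2.0))  # False
```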

If you’re designing HITL interventions, here are implementable nuggets to apply:

Define outsized thresholds. Quantify high impact in dollars, safety risk, or SLA breach probability and use that to gate escalation.

Automate enrichment. Assemble linked orders, QC status, carrier ETAs, and customs flags before routing to a person.

Route by confidence + impact. Only escalate when confidence < t or impact > I; tune t and I from real incidents.

Deliver one‑screen briefs. Give humans 2–3 options, exact costs, affected POs, and the single missing fact to resolve.

Make humans accountable, not busy. Require a short rationale and record the decision as training data.

Preserve skill with drills. Schedule simulated takeovers so operators stay sharp.

Measure human cost. Track time per escalation, decision latency, and avoided downtime to optimize thresholds.
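To make the last nugget concrete, here is a small sketch that summarizes escalation logs. The `Escalation` fields, the choice of medians, and the sample values are all hypothetical; the point is only that the three quantities named above can be reduced to a few numbers worth tracking over time.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Escalation:
    review_minutes: float        # time the human spent on the brief
    latency_minutes: float       # time from escalation to decision
    avoided_downtime_usd: float  # estimated downtime cost avoided


def summarize(escalations: list[Escalation]) -> dict[str, float]:
    total_review = sum(e.review_minutes for e in escalations)
    total_avoided = sum(e.avoided_downtime_usd for e in escalations)
    return {
        "median_review_minutes": median(e.review_minutes for e in escalations),
        "median_latency_minutes": median(e.latency_minutes for e in escalations),
        "avoided_usd_per_review_minute": total_avoided / total_review,
    }


log = [
    Escalation(4.0, 12.0, 300_000.0),
    Escalation(6.0, 20.0, 0.0),
    Escalation(10.0, 45.0, 50_000.0),
]
print(summarize(log))
```

If the avoided downtime per review minute falls as thresholds are loosened, that suggests humans are being pulled into cases that did not need them.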

This approach aligns with decades of human‑factors and hybrid‑intelligence thinking: automation should neither deskill operators nor create endless verification work. When HITL is designed well, people stop being the bottleneck and become the system’s most valuable asset: the ones who teach, audit, and take responsibility when it matters.